Assessment security refers to the “measures taken to harden assessments against attempts to cheat” (Dawson, 2021, p. 2). It encompasses strategies and design choices intended to protect the integrity of assessment tasks by ensuring that the work submitted is:

- the student's own

- completed under the conditions intended

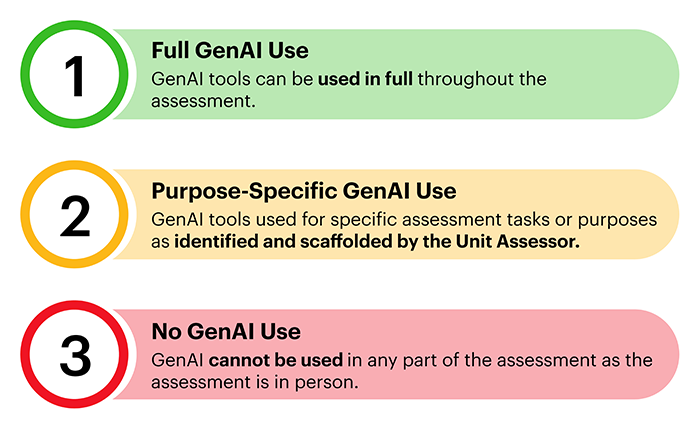

- free from unauthorised assistance — including the use of generative artificial intelligence (gen AI) and contract cheating services.

Assessment security is distinct from institutional activities designed to highlight the value of academic integrity and otherwise deter undesirable behaviours.

The importance of assessment security extends beyond individual courses or student academic performance. It underpins the social licence of educational institutions: the public’s trust that qualifications represent genuine achievement and that graduates are competent in their fields. Without credible assessments, the value of a credential is diminished, damaging both institutional reputation and societal confidence in higher education outcomes.

Institutions are increasingly adopting a program-level view of assessment rather than considering each subject in isolation. Approaches such as Programmatic Assessment (Schuwirth & Van der Vleuten, 2011) and the “2-lane” approach, among others, seek to balance institutional logistics, assessment for learning and assessment of learning.

It is generally advisable that assessments with low to medium security (see table below) do not carry a high weighting for marks or for progression to the next stage of a student’s program; they are better used principally as formative rather than summative assessment. Accurately evaluating the security level of each assessment type is therefore important.

The table below categorises common assessment formats by their relative vulnerability to academic misconduct.

Assessment types and security levels

| Assessment type | Assessment security level | Explanation |
| --- | --- | --- |
| In-person supervised written exams | High | Conducted under invigilation; low risk of third-party help or collusion. No LMS involvement. Seating arrangements can reduce potential peer signalling. |
| Oral exams / viva voce | High | Real-time interaction with assessors; impersonation is very difficult. Not vulnerable to LMS threats. |
| In-class written tasks | High | Live conditions reduce risk of collusion or external help. Minimal LMS exposure, although digital in-class tasks can be completed by third parties. |
| Simulations / role plays (in-person) | High | Collusion is difficult due to live, interactive nature. Tasks typically require spontaneous performance. |
| Viva voces | High | Live conditions reduce risk of collusion or external help. Unlike presentations, not all content or answers can be prepared in advance. |
| Practical / lab-based assessments | Medium to high | Performance-based tasks limit outsourcing, but collusion can occur via shared work or peer support unless roles are clearly defined. |
| Presentations (in-person) | Medium to high | Harder to collude during delivery, but preparation materials (slides, scripts) can be developed by others. |
| Online proctored exams | Medium | LMS credentials can be shared with third parties; proctoring may not detect collusion (e.g. messaging with peers during exams). |
| Group projects | Low to medium | Intended collaboration can mask inappropriate collusion. One student may do all the work, or external help can be used. LMS tools may hide individual contributions. Students often have a clear understanding of what work their group members have undertaken. |
| Presentations (recorded or online) | Low to medium | Higher collusion risk: peers may co-develop or edit content. Scripts or full videos can be produced externally and uploaded, increasing risk of deepfakes. |
| Peer review tasks | Low to medium | Students may coordinate reviews with friends, give favourable feedback, or copy others’ responses. Online platforms enable manipulation. |
| Take-home exams (time-limited) | Low | Students can collaborate informally or share answers. LMS access can be shared to allow real-time assistance. |
| Online quizzes (untimed/open-book) | Low | High collusion risk: students may complete quizzes together or share answers. Easy to outsource via shared LMS access. |
| Essays / research papers | Low | High risk of contract cheating and peer collaboration. Students may exchange drafts or copy structure/arguments. LMS submission portals may be accessed by others. |
| Portfolios / reflective journals | Low | Often completed individually, but prompts and reflections can be shared or copied between peers. LMS access may be used to upload third-party or peer-written content. |
| Discussion board posts / participation | Low | Very high risk of collusion: students often copy or paraphrase each other’s posts. LMS accounts may be shared with others to post on behalf of students. |
| Capstone projects / theses | Low | Students may collaborate inappropriately on research or writing. Risk of peer editing or contract cheating. Third-party LMS access may be used to submit externally produced work. |

Enhancing assessment security

Various actions can be taken to enhance assessment security by making academic misconduct harder to engage in or easier to detect. Research into academic misconduct shows that some strategies academics intuitively expect to enhance assessment security may not work, while others are more effective. At the same time, avoiding low-security assessments is recommended.

Dawson (2021) noted three low-security assessments that are easily avoidable “assessment design mistakes”:

- summative unsupervised online tests and quizzes

- recycled assignments from previous teaching periods

- take-home assignments with a single correct answer.

It has been suggested that constraining students’ time to work on assignments may make it harder for them to engage in contract cheating because it may be difficult to find someone to complete the assignment at short notice. Evidence shows, however, that making turnaround times shorter with the aim of limiting students’ ability to outsource assignments makes cheating more likely.

Surveys of students indicate that they are more likely to outsource assignments when under time pressure (Bretag et al., 2019), and analysis of contract cheating providers shows repeated claims of producing assignments at short notice (Wallace & Newton, 2014). These short turnaround times include rapidly providing answers to questions posted in online quizzes (Lancaster & Cotarlan, 2021).

More viable options to enhance assessment security include:

- using text-matching software to aid in detecting plagiarism

- training markers to recognise signs of academic misconduct

- monitoring file-sharing sites for uploaded course information and assessments

- using platforms that monitor students’ access to assessments and record version histories of their work

- monitoring students’ engagement with learning management systems

- training invigilators of exams, and academics who supervise in-class tests, to recognise and respond appropriately to academic misconduct.

References

Bretag, T., Harper, R., Burton, M., Ellis, C., Newton, P., Rozenberg, P., ... & Van Haeringen, K. (2019). Contract cheating: A survey of Australian university students. Studies in Higher Education, 44(11), 1837-1856.

Dawson, P. (2021). Defending Assessment Security in a Digital World: Preventing E-Cheating and Supporting Academic Integrity in Higher Education (1st ed.). Routledge.

Lancaster, T., & Cotarlan, C. (2021). Contract cheating by STEM students through a file sharing website: a Covid-19 pandemic perspective. International Journal for Educational Integrity, 17(1), 3.

Schuwirth, L. W. T., & Van der Vleuten, C. P. M. (2011). Programmatic assessment: From assessment of learning to assessment for learning. Medical Teacher, 33(6), 478–485.

The “2-lane” approach, Adam Bridgeman, Danny Liu and Ruth Weeks, University of Sydney.

Wallace, M. J., & Newton, P. M. (2014). Turnaround time and market capacity in contract cheating. Educational Studies, 40(2), 233-236.