Authors: Professor Ruth Greenaway, Dr Zachery Quince, Dr Joanne Munn, Southern Cross University

Focus area: Assessment design

Generative artificial intelligence (gen AI) enables students to produce sophisticated academic outputs with minimal effort, challenging traditional assessment methods and raising concerns about academic integrity. Southern Cross University (SCU) has responded to this challenge by developing the Assessment Adaptation Model – Gen AI (AAM-Gen AI), a comprehensive, pedagogically grounded model designed to help educators adapt assessments to be resilient and meaningful in the gen AI era.

Gen AI tools have made traditional assessments vulnerable to misuse. Addressing this requires systemic change that moves beyond reactive policies and detection-based approaches towards proactive, authentic assessment designs that foster deep learning, critical thinking and ethical reasoning.

Authentic assessments, which mirror real-world complexity and require personal engagement, are less susceptible to gen AI misuse and promote transferable graduate skills. SCU’s AAM-Gen AI arises from this context and aims to align assessment design with both academic integrity and the evolving digital landscape.

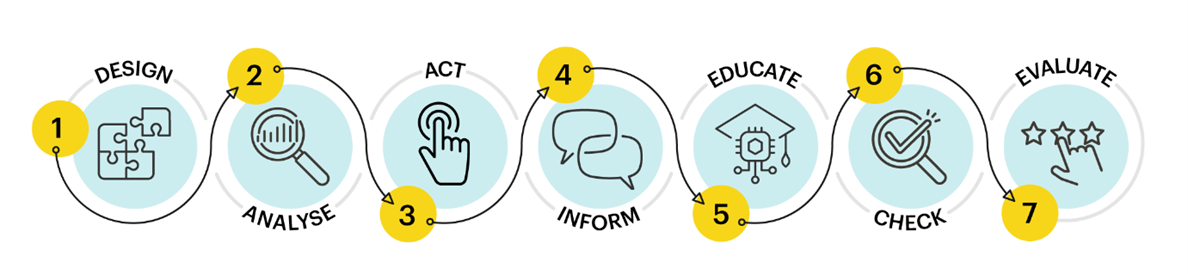

The AAM-Gen AI model consists of seven interrelated components spanning the assessment lifecycle. It promotes a holistic, proactive approach that integrates gen AI considerations into every stage of assessment, encouraging transparent, ethical and capability-building practices rather than punitive measures.

- Design: Craft assessment tasks that emphasise higher-order thinking, contextual relevance and personal engagement to reduce gen AI misuse and enhance learning.

- Analyse: Critically evaluate assessments using a security risk matrix to identify and mitigate vulnerabilities to gen AI exploitation.

- Act: Implement strategic changes, such as multi-stage tasks, using security rating scales to strengthen assessment integrity.

- Inform: Clearly communicate gen AI usage policies to students to support fairness and ethical learning.

- Educate: Develop students’ AI literacy and critical thinking to foster ethical and informed engagement with gen AI tools.

- Check: Verify authenticity through nuanced, evidence-based approaches while promoting a culture of trust and accountability.

- Evaluate: Continuously review and refine assessment practices to ensure alignment with learning goals and responsiveness to gen AI developments.

Key lessons or points for implementation

- Spend time reviewing current assessments and proactively redesign them with gen AI in mind.

- A security risk matrix is a starting point for conversations about reconsidering assessment design; it is not a definitive measure of assessment security.

Assessment Adaptation Model-Gen AI (AAM-Gen AI)